Does this scenario sound familiar?

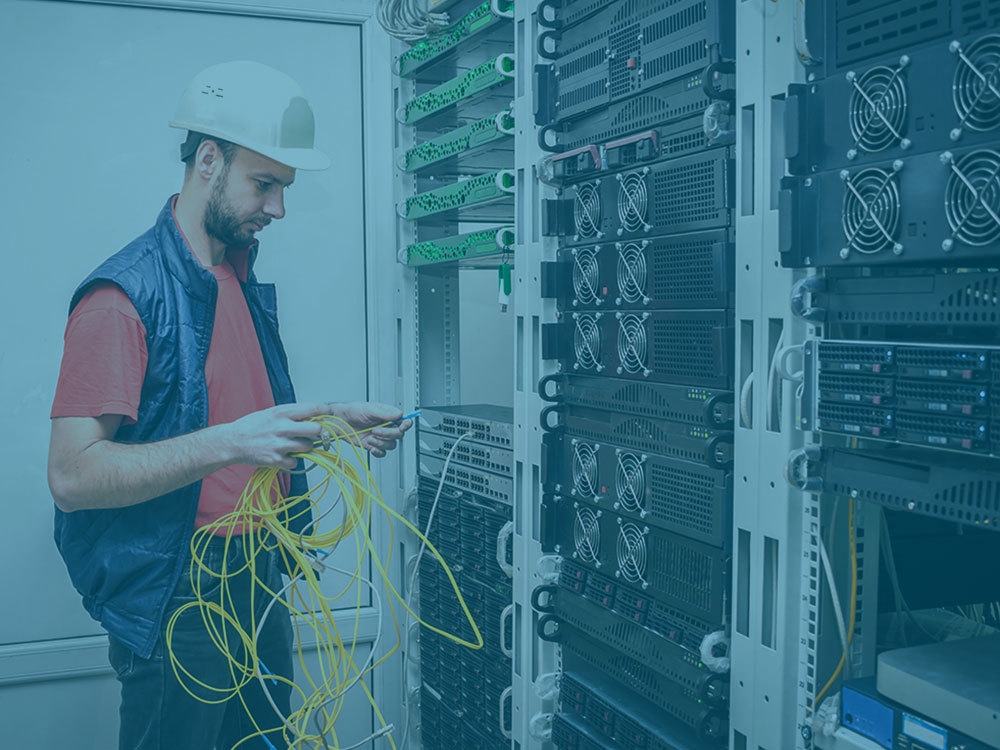

You had a plan and a strategy when you built or reorganized your data center. It was beautiful, organized, and streamlined. The system was efficient, and so was its power consumption. Fast-forward six months, and your company's computing requirements have grown dramatically. Your once well-organized data center or network closet has, through no fault of its own, become a Frankenstein: an extra rack here, a few more cables there. Things were once labeled well, but now at least one of those labels refers to hardware that no longer exists. Now you're getting notices of heat spikes and system inefficiencies. It's time to send more cool air into the room, right?

If any of this sounds a little familiar, we want to help you find space within your racks, avoid buying unnecessary equipment, and save money on your cooling costs.

As computing needs grow, cooling concerns can fall by the wayside in the rush to add capacity. With a little extra planning, you can increase computing power, plan for cooling and energy, and keep your system organized and manageable.

Often, with small adjustments or a modest investment, you don't need to increase cooling capacity at all. Instead, you need to make smarter decisions about the components inside the rack.

Not Too Hot, Not Too Cold Inside the Racks

Before we get to that, let's talk about the optimal temperature for the data center. As we work to maximize efficiency inside the rack, what is the most efficient temperature for your data center to run at?

It's a common misconception that data centers should be kept freezing cold. Most IT equipment manufacturers for small- to medium-sized data centers recommend an intake temperature of 77 degrees Fahrenheit (25 degrees Celsius).

Running for long periods of time at 90 degrees Fahrenheit will shorten the lifespan of the equipment.

Keeping the server room below 77 degrees Fahrenheit doesn't make the equipment run any more efficiently, so the extra cooling is an unnecessary expense and a waste of energy.

Above 77 degrees Fahrenheit, the equipment will still run, but its fans speed up and its power consumption rises, which cancels out the energy and financial savings of running the room warmer.
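If you want to check heat-spike notices against these guidelines, here is a minimal Python sketch that sorts rack intake readings into bands. It assumes the 77 degrees Fahrenheit target and the 90 degrees Fahrenheit long-term limit described above; the tolerance, band labels, function names, and sample readings are hypothetical, and your equipment manufacturers' specifications should take precedence.

```python
# Minimal sketch: classify rack intake temperatures against this article's guidance.
# Assumptions: 77 F target and 90 F long-term limit from the article; the
# 1 F tolerance, band labels, and sample readings are hypothetical.

TARGET_F = 77.0          # recommended intake temperature
LIFESPAN_RISK_F = 90.0   # sustained operation here shortens equipment life


def fahrenheit_to_celsius(temp_f: float) -> float:
    """Convert a Fahrenheit reading to Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


def classify_intake(temp_f: float, tolerance_f: float = 1.0) -> str:
    """Return a band label for one intake reading in degrees Fahrenheit."""
    if temp_f >= LIFESPAN_RISK_F:
        return "too hot: sustained operation shortens equipment life"
    if temp_f > TARGET_F + tolerance_f:
        return "warm: fan speed and power draw rise"
    if temp_f < TARGET_F - tolerance_f:
        return "overcooled: cooling energy is being wasted"
    return "on target"


if __name__ == "__main__":
    readings = {"rack-A": 68.0, "rack-B": 77.5, "rack-C": 84.0, "rack-D": 91.0}
    for rack, temp in readings.items():
        celsius = fahrenheit_to_celsius(temp)
        print(f"{rack}: {temp:.1f} F ({celsius:.1f} C) -> {classify_intake(temp)}")
```

Readings well below the target are flagged as wasted cooling rather than as "safe," which reflects the point that extra cold air buys no extra efficiency.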

Relying on the air conditioning system alone to cool the room, without looking at the overall power and cooling strategy, also wastes energy and money.

Cooling the Room

Sending more cold air into the room through the building's HVAC system or a CRAC (computer room air conditioner) may not be the best long-term solution for keeping your data center cool and running efficiently.

The first step we would recommend is a site audit of your data center or server closet. This will give us an understanding of the heating and cooling inefficiencies within your space.

Instead of pumping more cool air into the room, we need to find ways to separate the hot air from the cool air. IT equipment constantly gives off hot air, so the objective is to direct that hot air away from the equipment. Otherwise the hot air mixes with the cool air and raises its temperature.

Like water, hot air seeks the path of least resistance, and simple solutions can make a big difference in redirecting the heat. If servers and racks are mounted with too much open space around them, the hot air will recirculate and raise the intake air temperature. Even in a chilly room, this can cause servers to overheat and shut down. Organizing the inside of your racks helps prevent this.
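During a site audit, one simple way to spot recirculation is to compare the air a cooling unit supplies with the air actually arriving at each rack's intake. The Python sketch below assumes you can read both temperatures in Fahrenheit; the 5-degree threshold, the `RackReading` type, and the sample values are hypothetical starting points, not an industry standard.

```python
# Minimal sketch of a recirculation check for a site audit.
# Assumptions: supply air temperature (at the cooling unit) and rack intake
# temperatures are both in Fahrenheit. The 5 F threshold is hypothetical.

from dataclasses import dataclass


@dataclass
class RackReading:
    rack: str
    intake_f: float


def flag_recirculation(supply_f: float, readings: list[RackReading],
                       max_rise_f: float = 5.0) -> list[str]:
    """Return racks whose intake air is much warmer than the supplied cool air.

    A large rise between supply and intake suggests exhaust air is mixing
    back into the cool air before it reaches the equipment.
    """
    flagged = []
    for r in readings:
        rise = r.intake_f - supply_f
        if rise > max_rise_f:
            flagged.append(f"{r.rack} (+{rise:.1f} F)")
    return flagged


if __name__ == "__main__":
    supply = 68.0
    audit = [RackReading("rack-A", 70.0), RackReading("rack-B", 79.5),
             RackReading("rack-C", 72.5)]
    print(flag_recirculation(supply, audit))
```

A flagged rack is a place to look for open rack units, blocked exhaust, or cable clutter, rather than a reason to turn the air conditioning down.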

Inside the Rack

There are techniques for arranging equipment in the room itself, but let's focus on some lower-cost strategies inside the racks. From cable management to efficient new PDUs to blanking panels, these simple solutions can keep your system cool without increasing the energy bill.

Sometimes the small adjustments can have the biggest impact on the overall efficiency.

Power Distribution Inside the Rack

The beauty of racks is that you can customize them with whatever manufacturer's equipment you want. The downside of racks is that you can customize them with whatever equipment you want.

You can add whatever server or power source you want, or whatever happens to be available, inexpensive, or needed at the time.

You may end up with equipment in the same rack that requires different power inputs, which can double the amount of power equipment in the rack. Not only is this an inefficient use of space, it creates disorganization and a higher potential for human error.

Universal PDU

One new solution that we've found and are really excited about is Vertiv's uPDU.

Vertiv designed the uPDU as a universal power distribution unit (PDU): no matter the type of connection, the uPDU can adapt to it. Vertiv also designed it for worldwide use, so whether you're outfitting a data center in Sydney, Australia, or Kansas City, Missouri, you can use the uPDU.

With more data center managers working from home, we appreciate that it offers the ability to monitor remotely. You can even monitor power consumption at the outlet level.

If you have outlet-level managed or switched PDUs, you can turn individual receptacles "On" or "Off" remotely. This lets you control power consumption and keep equipment from drawing power without authorization.

Visualizing a power one-line diagram in a DCIM (data center infrastructure management) tool or on a printed schematic allows you to approve power usage at a specific receptacle. You can then turn that one receptacle "On" when it is requested.
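The approve-then-switch process can be modeled in a few lines. This Python sketch is a hypothetical in-memory model, not Vertiv's uPDU interface or any DCIM product's API: the `SwitchedPDU` class, its methods, and the outlet numbers are invented for illustration. It only shows the control logic, in which a receptacle can be turned "On" only after its power usage has been approved.

```python
# Minimal sketch of an approve-then-switch workflow for an outlet-level
# switched PDU. Hypothetical in-memory model only: it does not call any real
# Vertiv or DCIM API. Class, method, and outlet names are invented.


class SwitchedPDU:
    def __init__(self, name: str, outlets: int):
        self.name = name
        self.state = {n: False for n in range(1, outlets + 1)}  # False = "Off"
        self.approved: set[int] = set()

    def _check(self, outlet: int) -> None:
        if outlet not in self.state:
            raise ValueError(f"{self.name} has no outlet {outlet}")

    def approve(self, outlet: int) -> None:
        """Record that power usage at this receptacle has been approved."""
        self._check(outlet)
        self.approved.add(outlet)

    def request_on(self, outlet: int, requester: str) -> bool:
        """Turn an outlet "On" only if it was approved beforehand."""
        if outlet not in self.approved:
            print(f"Denied: {requester} requested unapproved outlet {outlet}")
            return False
        self.state[outlet] = True
        return True

    def turn_off(self, outlet: int) -> None:
        """Turn an outlet "Off" and revoke its approval (a design choice)."""
        self._check(outlet)
        self.state[outlet] = False
        self.approved.discard(outlet)


if __name__ == "__main__":
    pdu = SwitchedPDU("rack-A-pdu1", outlets=8)
    pdu.approve(3)
    pdu.request_on(3, "ops-team")   # approved, so outlet 3 turns on
    pdu.request_on(5, "ops-team")   # not approved, so the request is denied
```

Revoking approval when an outlet is switched off means every new power request goes back through review; a real deployment might prefer to keep approvals until hardware is decommissioned.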

The uPDU is compact. Depending on what you need in the rack, it can be mounted vertically or horizontally. This keeps things organized and open, so hot air can exit out the back and away from the equipment.

Vertiv has also put together a couple of videos that take a more in-depth look at the uPDU.

Cable Management Inside Data Center Racks

Cable management is often overlooked, but it can be an effective way to keep things cooler inside the rack and save you headaches along the way.

Proper cable management can benefit you in all sorts of ways.

- Prevent Cable Damage: Damaged cables can cause data transmission problems and potential system downtime.
- Easier to Scale Up: An organized internal system makes it easier to integrate new equipment as computing needs grow.
- Cooling Efficiencies: Keeping cables organized and out of critical airflow paths allows hot air to move away from the equipment.

Cable Management Inside the Racks

Here are a few ideas to keep in mind before you begin:

Have a Plan

This is the obvious place to start. Know the types of cables you have to plan for and whether they will enter from the top or the bottom of the rack.

Identify or Label the Cables

To know at a glance what you're looking at, use colored cables or cable managers. You may also consider creating a cheat sheet and affixing it inside the rack. Before you start, do some research on the types of cable managers that will benefit your system most.

Secure and Retain Cables

Use cable ties or cable managers to reinforce cables in tricky areas. If cables will come into contact with sharp edges or heated areas, they need to be protected.

Cable Management Tools

As you're putting together your plan, you'll have a wide range of cable management tools to choose from. Do some research and test options to find the ones that will be most efficient for you to use. Here are a few example tools that might work for your system:

Lacer Bars

These basic metal bars act as supports for cables. Lacer bars allow the cables to spread out, increasing airflow within the rack. You can also use lacer bars to keep power cables separated from data cables, which helps cut down on signal interference.

D-Rings

D-rings, or distribution rings, are made of either metal or plastic. You'll choose based on whether you need sturdiness or flexibility when managing your cables. There are horizontal and vertical variations, depending on how you need them oriented within the rack or even on a wall.

Cable Spools

When you have excess cable that you want to keep from getting tangled, use a cable spool. These simple plastic pieces keep the cable from bending tighter than its bend radius, which prevents damage.

Vertical Cable Managers

These are designed for cables that need to run the length of the rack. Vertical cable managers have wide openings and can accommodate large numbers of cables. They are built with "fingers" that handle a standard cable's bend radius without causing any damage.

Horizontal Managers

There are several different types of horizontal managers. One of the most common uses built-in D-rings to secure cables; if you need to access cables frequently, the D-ring version might be the better option for you. Another variation, like vertical cable managers, uses cable trays with finger ducts. This design is sturdier than D-rings, but the cables are harder to access. If you intend the setup to be permanent, a horizontal manager with cable trays and finger ducts might be the best option for your system.

Blanking Panels

Blanking panels are another simple and often overlooked solution inside data center racks.

Prevent Hot Air Recirculation

The goal in keeping equipment cool is to direct hot air away from it. Rack equipment is designed to draw air in through the front and exhaust it out the back.

When choosing racks, you'll have the option of open racks or enclosures. Open racks make it easier to reach equipment and make updates. You might think the open sides, or even perforated panels, would be better for dissipating heat around the equipment, but in reality they offer very little control over airflow.

Choose enclosed racks, or install blanking panels in unused rack spaces. This seemingly insignificant step is critical for directing air along the path of least resistance: out the back and away from the equipment. Using blanking panels is more efficient and cost-effective than pumping more cool air into the room.
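Blanking panels go in the open rack units between pieces of equipment, so the first step is knowing where those gaps are. This Python sketch assumes a 42U rack (a common size, not one stated in this article) and equipment positions recorded as hypothetical `(bottom_u, height_u)` pairs; it returns each contiguous run of open units that would need a panel.

```python
# Minimal sketch: find the empty rack units that need blanking panels.
# Assumptions: a 42U rack and equipment recorded as (bottom_u, height_u)
# pairs, with U1 at the bottom. Positions below are hypothetical.


def empty_spans(occupied: list[tuple[int, int]],
                rack_units: int = 42) -> list[tuple[int, int]]:
    """Return (start_u, height_u) for each contiguous run of open rack units."""
    used = set()
    for bottom, height in occupied:
        used.update(range(bottom, bottom + height))

    spans = []
    start = None
    for u in range(1, rack_units + 1):
        if u not in used and start is None:
            start = u                          # an open run begins here
        elif u in used and start is not None:
            spans.append((start, u - start))   # the run ends just below u
            start = None
    if start is not None:                      # run continues to the top
        spans.append((start, rack_units + 1 - start))
    return spans


if __name__ == "__main__":
    servers = [(1, 2), (3, 1), (10, 4), (20, 2)]
    gaps = empty_spans(servers)
    print(gaps)
    print("Total U to blank:", sum(height for _, height in gaps))
```

For the sample layout, the gaps are U4 to U9, U14 to U19, and U22 to U42, or 33U of panels in total; that count can feed directly into a purchase list.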

To Conclude

To recap our “Inside the Rack” series, as computing needs increase for data centers, there’s also an increase in racks and equipment to meet the demand. This means an increase in heat as well. Without a strategy in place, this could cause overheating and the potential for downtime within the data center.

Data center equipment is designed to operate efficiently at 77 degrees Fahrenheit, and it's a waste of energy and money to keep it any cooler than that. Pumping cold air into the room, even at 77 degrees, without redirecting the hot air away from the equipment still wastes money. That's why it pays to focus on efficiencies inside the rack.

Finding ways to consolidate and organize within the racks can lead to large savings in the data center. Your strategy should cover power management, cable management, and how you use the space around the rack.

If you’re looking for help creating your strategy inside data center racks, give us a call at 877.592.4259 or contact us through the website.